How to Create Adaptive Music That Changes With Listener Mood

In 2026, adaptive music has evolved from an experimental concept into a mainstream tool for creators in gaming, wellness, advertising, and interactive experiences. Whether you’re a producer building immersive soundtracks or an AI developer designing emotionally responsive applications, understanding how to create adaptive music (music that shifts with listener mood or environmental context) can make your work more engaging.

What is Adaptive Music and Why Does It Matter in 2026?

Adaptive music refers to compositions that change dynamically based on inputs such as user emotion, behavior, or contextual data. In gaming, this might mean a soundtrack that intensifies during battle scenes and calms during exploration. In wellness, it could adapt to a meditator’s heartbeat or breathing rhythm. By 2026, advancements in dynamic music AI and emotion recognition have made such experiences accessible to independent creators and small studios.

For artists, adaptive music opens a new creative frontier, one where the sound isn’t static but fluid, continuously reacting to the listener. This approach increases engagement, retention, and emotional impact, especially in apps and platforms focused on personalization.

How Does Mood-Based Music Work?

Mood-based music systems rely on data-driven interaction. The process typically involves:

- Mood Detection: Using sensors, behavior analysis, or direct user input to capture emotional states — happy, calm, focused, or stressed.

- Adaptive Response: The system selects or generates music fitting that emotional parameter.

- Real-Time Adjustment: Not every system reacts instantly. Most current workflows update playback asynchronously, shortly after a mood change is detected.
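The three stages above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the valence/arousal inputs, the threshold values, and the track filenames are hypothetical and do not come from Soundverse or any specific emotion-recognition API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical track files, one pre-generated loop per mood label.
TRACKS = {
    "happy": "happy_loop.wav",
    "calm": "calm_loop.wav",
    "focused": "focused_loop.wav",
    "stressed": "stressed_loop.wav",
}


@dataclass
class MoodReading:
    valence: float  # -1.0 (unpleasant) to 1.0 (pleasant); assumed input scale
    arousal: float  # 0.0 (low energy) to 1.0 (high energy); assumed input scale


def detect_mood(reading: MoodReading) -> str:
    """Mood detection: map a reading to a coarse label (arbitrary thresholds)."""
    if reading.arousal >= 0.7 and reading.valence < 0:
        return "stressed"
    if reading.valence >= 0.3 and reading.arousal >= 0.5:
        return "happy"
    if reading.arousal < 0.3:
        return "calm"
    return "focused"


class AdaptivePlayer:
    """Adaptive response: pick a track only when the detected mood changes."""

    def __init__(self) -> None:
        self.current_mood: Optional[str] = None

    def update(self, reading: MoodReading) -> Optional[str]:
        mood = detect_mood(reading)
        if mood == self.current_mood:
            return None  # no change, keep playing the current track
        self.current_mood = mood
        return TRACKS[mood]  # the caller schedules the actual switch
```

Calling `update()` whenever a new reading arrives keeps playback unchanged until the label actually changes, which matches the asynchronous adjustment described in the last bullet.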

By 2026, AI models can analyze textual prompts and sensor data to tailor music that feels emotionally connected to its listener. This innovation is redefining everything from therapeutic sound design to interactive storytelling. For a deeper dive into creative flexibility, explore AI Music for Multi-Mode Audio Devices and Exploring the Depths of Creative Range in 2026 - Soundverse AI.

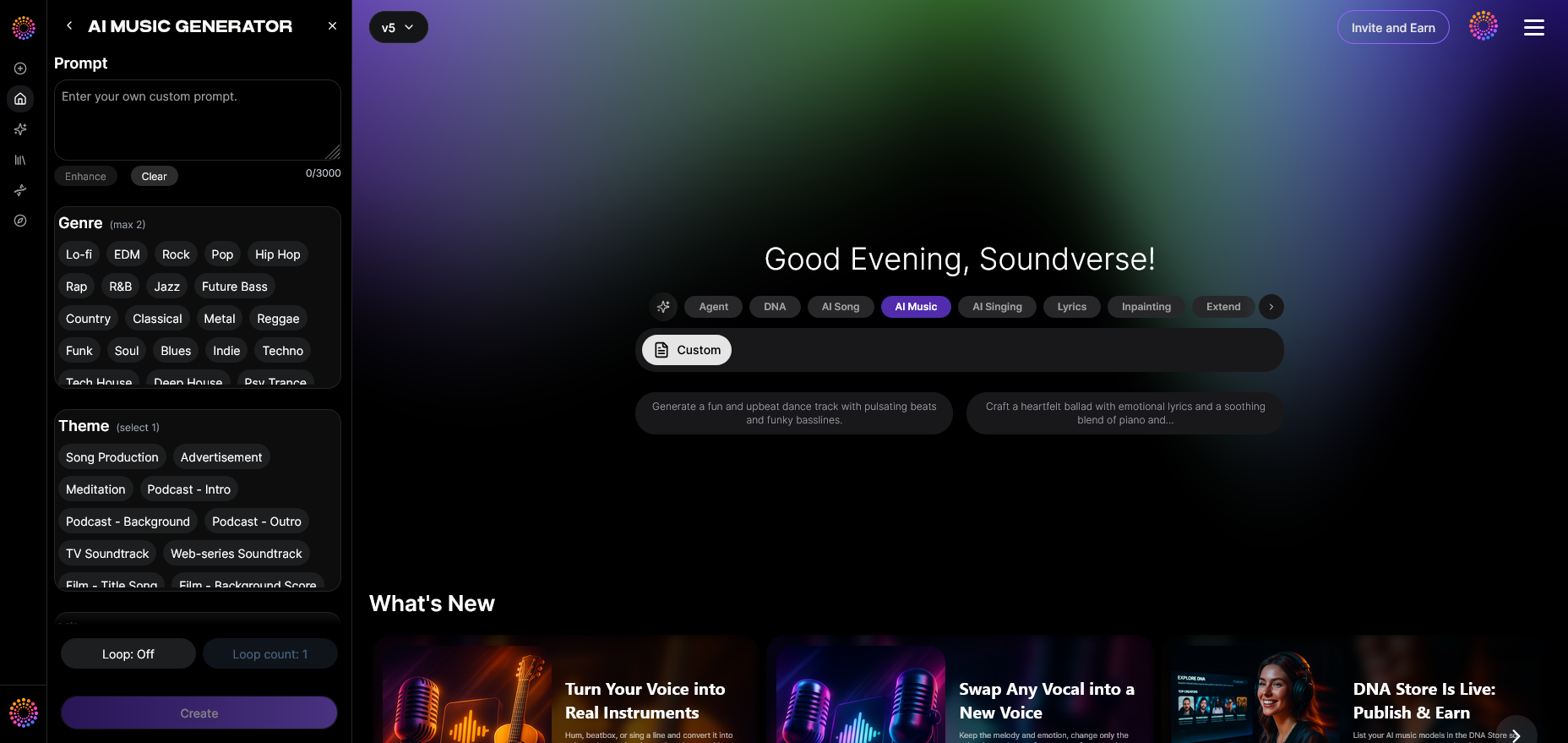

How to Make Adaptive Music with Soundverse AI Music Generator

To create adaptive music for any application, Soundverse offers an intuitive yet powerful solution. The AI Music Generator is designed for creating original, fully produced instrumental compositions directly from descriptive text prompts. This tool specializes in generating background tracks, rhythmic beats, and ambient soundscapes without vocals — ideal for dynamic experiences.

Key Capabilities That Support Adaptive Music Creation:

- Text-to-Music Generation: Describe emotional states, environments, or moods (e.g., “relaxing ambient for focus” or “tension-building cinematic score”).

- Loop Mode: Generate seamless looping sections for real-time adaptability in games or meditation apps.

- Detailed Genre, Mood, and Instrument Control: Choose specific parameters to match emotional profiles and transitions.

- Multiple Model Options: Switch between V4 and V5 engines based on the desired music complexity or texture.

Soundverse’s ecosystem doesn’t just generate sound — it connects creators to advanced tools:

- Similar Music Generator: Perfect for syncing adaptive music with a director-approved reference or mood track.

- Soundverse DNA: Enables adaptive consistency by maintaining sonic identity across all generated pieces — ensuring ethical, licensed audio creation.

- Agent: The intelligent conversational assistant that interprets natural language tasks and organizes workflows between tools.

With these tools combined, adaptive music can become practical and scalable for developers and composers alike.

For hands-on learning, watch the Soundverse Tutorial Series - 9. How to Make Music or explore our guide on creating Deep House music for detailed use cases.

Step 1: Feature Overview

Access the AI Music Generator from the Soundverse main menu. It’s located under Music Creation tools and forms the foundation for adaptive sound design workflows.

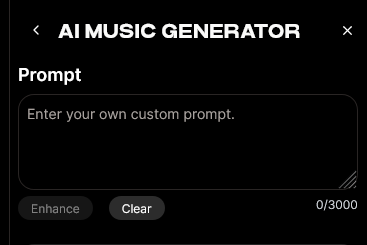

Step 2: Description Input

Enter a detailed text description capturing the desired mood and setting. For example, “serene ambient background that transitions to uplifting tones when user becomes active.” The AI interprets this and tailors the generation to the emotional context.
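If an application needs several mood states, it can help to write the descriptions from a shared template so the tracks stay comparable. The sketch below only assembles prompt text; it does not call any Soundverse API. The function name, the state names, and the idea of generating one track per state are assumptions made for illustration.

```python
from typing import Dict, Sequence


def build_prompt(mood: str, genre: str, instruments: Sequence[str] = (),
                 setting: str = "") -> str:
    """Assemble a descriptive text prompt from a few mood parameters."""
    parts = [f"{mood} {genre} instrumental"]
    if instruments:
        parts.append("featuring " + ", ".join(instruments))
    if setting:
        parts.append(f"for {setting}")
    return " ".join(parts)


# Hypothetical two-state design: one prompt (and one generated track) per state.
PROMPTS: Dict[str, str] = {
    "calm": build_prompt("serene", "ambient", ["soft pads", "piano"],
                         "a meditation app"),
    "active": build_prompt("uplifting", "electronic", ["bright synths"],
                           "a meditation app"),
}
```

Keeping the setting and phrasing identical across states, and varying only mood, genre, and instruments, makes it easier to hear what each parameter contributes.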

Step 3: Style & Duration

Select your preferred genre (ambient, electronic, orchestral) and set the track duration. Loop Mode can also be activated here, enabling continuously adaptable playback segments.
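When picking a duration for a loop, a length that covers a whole number of bars tends to wrap around more cleanly. The arithmetic below is a generic sketch; the function names are hypothetical, and this article does not state whether the generator follows an exact tempo or length, so treat the result as a target to check by ear.

```python
def loop_duration_seconds(bpm: float, bars: int, beats_per_bar: int = 4) -> float:
    """Length in seconds of a loop spanning a whole number of bars."""
    return bars * beats_per_bar * 60.0 / bpm


def whole_bars_for(target_seconds: float, bpm: float, beats_per_bar: int = 4) -> int:
    """Nearest whole number of bars to a target duration (at least one bar)."""
    bar_seconds = beats_per_bar * 60.0 / bpm
    return max(1, round(target_seconds / bar_seconds))


# Example: aim for roughly 30 seconds at 90 BPM in 4/4.
bars = whole_bars_for(30, 90)
duration = loop_duration_seconds(90, bars)
```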

Step 4: Generation

Run the generation process. Within seconds, Soundverse creates an original instrumental piece aligned with your description. Generation runs asynchronously: the track is rendered in the background and becomes available once processing finishes.

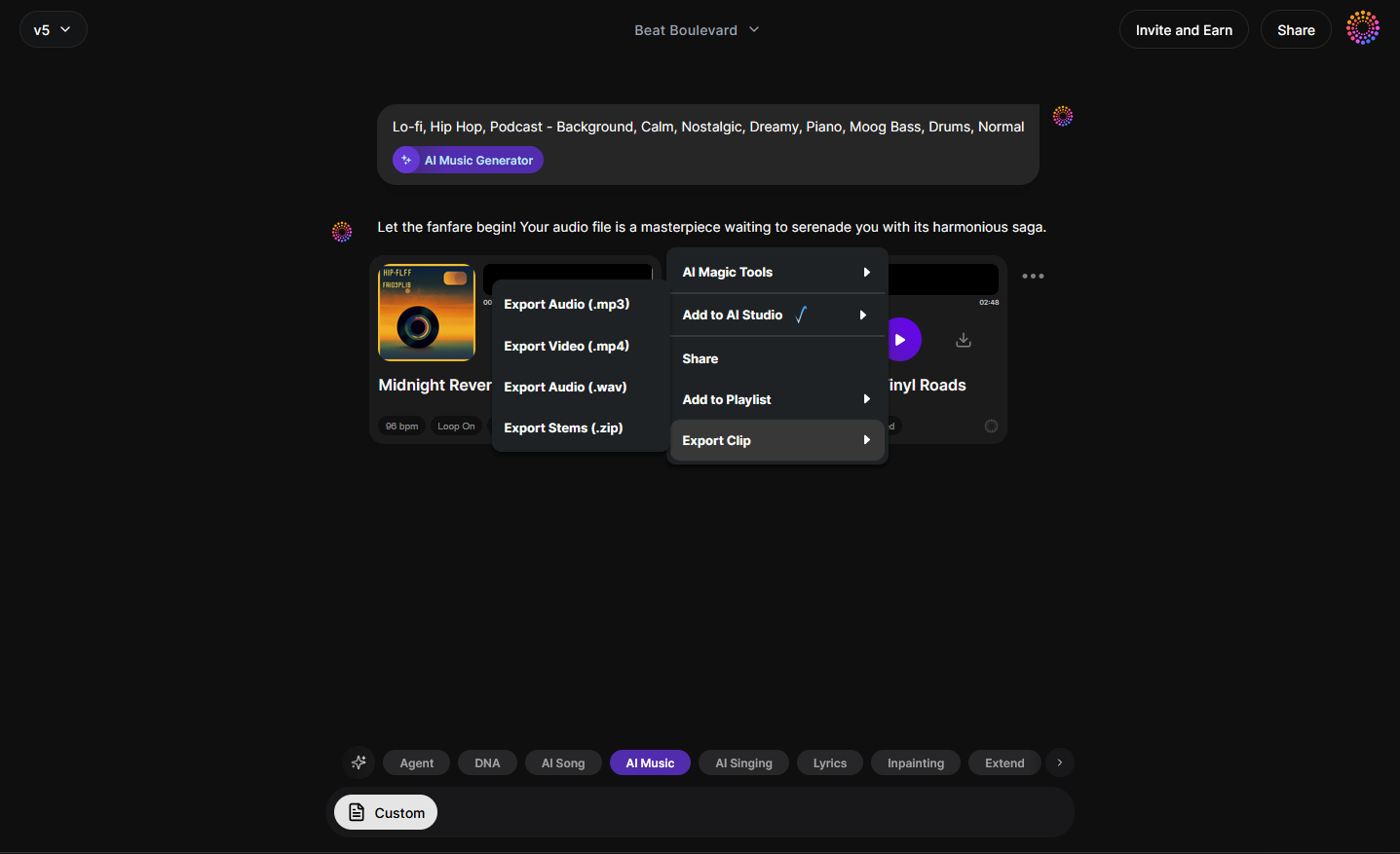

Step 5: Export Options

Once finalized, download your adaptive-ready instrumental. You can integrate these files into interactive media engines or use them with emotion-tracking APIs for dynamic applications.

How to Connect Adaptive Music to Dynamic Systems

Creating adaptive music is only half the story — connecting it to user experiences completes the workflow. In interactive media or games, developers typically:

- Use emotional metrics or game state triggers to switch between music files.

- Employ looping transitions generated by the AI to ensure smooth changeovers.

- Integrate APIs to modulate volume, tempo, or instrumentation dynamically.
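Two small helpers illustrate the switching and volume steps above: an equal-power crossfade, which keeps perceived loudness roughly steady while one track fades out and another fades in, and a calculation of how long to wait so a switch lands on a loop boundary. Both are generic audio techniques rather than Soundverse features, and the function names are hypothetical.

```python
import math
from typing import Tuple


def crossfade_gains(progress: float) -> Tuple[float, float]:
    """Equal-power crossfade: return (outgoing_gain, incoming_gain).

    progress runs from 0.0 (only the old track) to 1.0 (only the new track).
    The squared gains always sum to 1.
    """
    p = min(max(progress, 0.0), 1.0)  # clamp out-of-range input
    angle = p * math.pi / 2
    return math.cos(angle), math.sin(angle)


def seconds_to_loop_boundary(position_s: float, loop_length_s: float) -> float:
    """Time remaining until the current loop wraps around.

    Exactly on a boundary, this returns one full loop length.
    """
    return loop_length_s - (position_s % loop_length_s)
```

In a game or app, the audio engine would apply these gains to the two track volumes on each update, and start the fade when the wait returned by `seconds_to_loop_boundary` has elapsed.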

This architecture delivers an immersive result where music becomes a co-creator of emotion. You can explore advanced developments in interactive audio in related Soundverse resources such as AI ranks Soundverse’s AI Singer #1 – The Ultimate AI Singing Platform Dominating 2025 and Soundverse AI Magic Tools: Create Content Quickly With AI.

Pro Tips for Producers and Developers

- Start Simple: Design two core emotional states first, such as “calm” and “active.” Use Soundverse’s loopable outputs for seamless transitions.

- Leverage Genre Contrast: Pair distinct genres — ambient for relaxation, electronic for motivation — to make emotional changes more perceptible.

- Ensure Ethical Sound Creation: With Soundverse DNA, consistency and copyright safety are built in.

- Test Continuity: Check how generated loops feel when layered in real-world contexts. Smooth transitions prevent jarring experiences.
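For the two-state setup suggested in the first tip, one common way to avoid jarring back-and-forth switches is hysteresis: use a higher threshold to enter the "active" state than to leave it, and require the signal to hold for a few readings. The sketch below assumes a single energy value between 0 and 1; the class name and default thresholds are illustrative.

```python
class TwoStateSwitcher:
    """Switch between "calm" and "active" with hysteresis and a hold count."""

    def __init__(self, rise: float = 0.6, fall: float = 0.4, hold: int = 3) -> None:
        self.rise = rise    # energy needed to leave "calm"
        self.fall = fall    # energy needed to leave "active"
        self.hold = hold    # consecutive readings required before switching
        self.state = "calm"
        self._pending = 0   # consecutive readings that asked for a switch

    def update(self, energy: float) -> str:
        if self.state == "calm":
            wants_switch = energy >= self.rise
        else:
            wants_switch = energy <= self.fall
        # Count consecutive requests; any contrary reading resets the count.
        self._pending = self._pending + 1 if wants_switch else 0
        if self._pending >= self.hold:
            self.state = "active" if self.state == "calm" else "calm"
            self._pending = 0
        return self.state
```

A brief spike in the input is ignored, while a sustained change triggers exactly one transition.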

For creators needing additional inspiration, explore mood-driven composition examples in Generate AI Music with Soundverse Text-to-Music or compare approaches via Best Suno Alternative – Soundverse AI Free Sign-Up.

Why Adaptive Music Will Be Core to Audio Innovation in 2026

Several trends are converging toward adaptive sound design this year:

- Emotion Recognition APIs: Modern wearables and devices can estimate user mood from signals such as heart rate and breathing.

- Dynamic AI Engines: Text-to-music models now generate emotional phases and transitions automatically.

- Interactive Audio Interfaces: Systems can modulate loops based on subtle cues, creating living soundscapes.

- Personalization Demand: Creators across streaming, gaming, and wellness industries are prioritizing user-tailored immersion.

As consumers increasingly expect media that feels alive and personal, adaptive audio will become a defining quality of 2026-era content production.

Start Creating Adaptive Music with Soundverse

Use Soundverse’s powerful AI tools to compose tracks that respond to listener mood and energy. Instantly transform your creative workflow and bring emotion-driven soundscapes to life.

Try Soundverse Free

Related Articles

- How AI-Generated Music Is Transforming the Music Industry: Discover how AI is reshaping how music is composed, produced, and experienced around the world.

- Soundverse AI Magic Tools: Create Content Quickly with AI: Learn how Soundverse’s AI-driven tools help creators produce professional-quality music effortlessly.

- Generate AI Music with Soundverse Text-to-Music: Turn your ideas and emotions into adaptive tracks with Soundverse’s text-to-music technology.

- AI Music Generator and Human Composers: A Future Together: Explore how AI and human creativity can work hand in hand to build emotionally adaptive compositions.


Soundverse is an AI Assistant that allows content creators and music makers to create original content in a flash using Generative AI. With the help of Soundverse Assistant and AI Magic Tools, our users get an unfair advantage over other creators to create audio and music content quickly, easily, and cheaply.

Soundverse Assistant is your ultimate music companion. You simply speak to the assistant to get your stuff done. The more you speak to it, the more it starts understanding you and your goals.

AI Magic Tools help convert your creative dreams into tangible music and audio. Use AI Magic Tools such as text to music, stem separation, or lyrics generation to realise your content dreams faster.

Soundverse is here to take music production to the next level. We're not just a digital audio workstation (DAW) competing with Ableton or Logic; we're building a completely new paradigm of easy and conversational content creation.

TikTok: https://www.tiktok.com/@soundverse.ai

Twitter: https://twitter.com/soundverse_ai

Instagram: https://www.instagram.com/soundverse.ai

LinkedIn: https://www.linkedin.com/company/soundverseai

YouTube: https://www.youtube.com/@SoundverseAI

Facebook: https://www.facebook.com/profile.php?id=100095674445607

Join Soundverse for Free and make Viral AI Music

We are constantly building more product experiences. Keep checking our Blog to stay updated about them!
