How to Design Music for Extended Reality (XR) in 2026


In 2026, extended reality (XR) has become a central creative platform for digital entertainment, social interaction, and immersive storytelling. As VR headsets, AR glasses, and mixed-reality experiences continue to evolve, audio now plays as vital a role as visuals. For creators, learning how to design music for extended reality is essential to crafting believable and emotionally resonant virtual worlds.

Why Music Design Matters in Extended Reality (XR)

Music in XR is not just a background element—it’s part of the user’s environment. Whether building a virtual museum, a VR concert, an AR shopping experience, or a fully interactive narrative, immersive music helps orient users, define space, and create emotional continuity.

Unlike traditional film or game soundtracks that are mixed for stereo or surround systems, extended reality audio is spatially dynamic. This means that as a user turns their head or moves within a virtual environment, sound sources respond accordingly. Music must therefore be composed and mixed to feel natural in all directions—something we call spatial sound design.
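
To make the head-tracking idea concrete, here is a minimal Python sketch of how a source's direction relative to the listener could be recomputed whenever the head turns. The function name, the coordinate convention (yaw 0 faces +z, positive angles point to the listener's right), and the flat 2D layout are assumptions for illustration, not the API of any particular XR engine.

```python
import math

def relative_azimuth_deg(listener_xz, listener_yaw_deg, source_xz):
    """Angle of a source relative to the direction the listener faces.

    Assumed convention: yaw 0 faces +z, positive yaw turns toward +x.
    The result lies in [-180, 180); positive means "to the right".
    """
    dx = source_xz[0] - listener_xz[0]
    dz = source_xz[1] - listener_xz[1]
    # World-space bearing of the source, 0 degrees = straight along +z.
    world_angle = math.degrees(math.atan2(dx, dz))
    # Subtract the head yaw and wrap the result into [-180, 180).
    return (world_angle - listener_yaw_deg + 180.0) % 360.0 - 180.0

# A source straight ahead ends up on the listener's left after a 90-degree right turn.
ahead = relative_azimuth_deg((0.0, 0.0), 0.0, (0.0, 5.0))
after_turn = relative_azimuth_deg((0.0, 0.0), 90.0, (0.0, 5.0))
```

A spatializer would feed an angle like this into its panning or binaural stage on every frame, which is why music anchored to an object appears to stay put while the user looks around.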

In XR, sound designers must consider positioning, movement, and interaction. Sound objects can be attached to virtual elements—such as a waterfall or glowing crystal—or they can act as ambient beds that adapt based on what users interact with. This creates a deeply personalized experience.

What Are the Key Components of Extended Reality Audio?

To properly design music for extended reality, you must understand the main components that define XR sound.

1. Spatial Audio

Spatial audio simulates how sound is heard in the real world, using directional and distance cues to replicate three-dimensional space. Unlike standard left-right stereo panning, it relies on rendering techniques such as binaural and ambisonic processing to create the illusion of being surrounded by sound. Events such as the 6th International Conference on Audio for Virtual and Augmented Reality and Immersive Games highlight how such tools are shaping the next stage of XR experiences.
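
As a rough illustration of ambisonic rendering, the sketch below encodes one mono sample into first-order ambisonics using the widely used AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalization). The function name is hypothetical, and the azimuth is assumed to be measured counterclockwise from the front, so positive angles point to the listener's left.

```python
import math

def encode_first_order_ambix(sample, azimuth_deg, elevation_deg=0.0):
    """Encode a mono sample into first-order AmbiX (W, Y, Z, X).

    Assumed convention: azimuth 0 is straight ahead and increases toward
    the listener's left; elevation 0 is ear level and increases upward.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample                                # omnidirectional component
    y = sample * math.sin(az) * math.cos(el)  # left-right axis
    z = sample * math.sin(el)                 # up-down axis
    x = sample * math.cos(az) * math.cos(el)  # front-back axis
    return (w, y, z, x)

front = encode_first_order_ambix(1.0, 0.0)
left = encode_first_order_ambix(1.0, 90.0)
```

In practice a game engine or a dedicated plugin performs this step and the later decode to headphones; the point here is only that direction is stored in the balance between channels rather than in a fixed left-right pan.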

2. Interactive Layers

In XR, music can evolve depending on the user’s actions. Interactive layers allow developers to fade in or out particular elements as the user moves or triggers certain events. This creates adaptive virtual reality audio that responds in real time.
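
One common way to implement interactive layers is to map a single intensity value to a gain per stem. The following Python sketch assumes a hypothetical setup in which each layer has a start point and a "fully in" point on a 0 to 1 intensity scale; the layer names and breakpoints are illustrative only.

```python
def layer_gains(intensity, layers):
    """Return a gain between 0 and 1 for each music layer.

    layers maps a layer name to (start, full): the layer is silent below
    start, fully audible at or above full, and fades linearly in between.
    A layer with full <= start switches on immediately at start.
    """
    intensity = min(max(intensity, 0.0), 1.0)
    gains = {}
    for name, (start, full) in layers.items():
        if full <= start:
            gains[name] = 1.0 if intensity >= start else 0.0
        else:
            ramp = (intensity - start) / (full - start)
            gains[name] = min(max(ramp, 0.0), 1.0)
    return gains

# Hypothetical stems: a pad that always plays, plus two layers that fade in.
stems = {"pad": (0.0, 0.0), "percussion": (0.25, 0.75), "lead": (0.5, 1.0)}
calm = layer_gains(0.0, stems)
mid = layer_gains(0.5, stems)
```

The intensity value could come from any game-side signal, such as how close the user is to a point of interest or how many objects they have activated.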

3. Environmental Integration

The best XR soundtracks are designed to blend with the virtual environment’s physics and style. The reverberation inside a cave, or how low frequencies carry across a digital forest, should feel realistic and emotionally consistent.
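
As a starting point for matching reverb to a virtual space, Sabine's formula estimates reverberation time as RT60 = 0.161 · V / A, where V is the room volume in cubic metres and A is the total absorption (each surface area multiplied by its absorption coefficient). The sketch below applies it to a simple box-shaped room; the helper names and the absorption values are illustrative assumptions, not measured data.

```python
def box_surfaces(width, depth, height, absorption):
    """List the six surfaces of a box room as (area_m2, absorption) pairs."""
    return [
        (width * depth, absorption),   # floor
        (width * depth, absorption),   # ceiling
        (width * height, absorption),  # long wall
        (width * height, absorption),  # opposite long wall
        (depth * height, absorption),  # short wall
        (depth * height, absorption),  # opposite short wall
    ]

def sabine_rt60(volume_m3, surfaces):
    """Estimate reverberation time in seconds with Sabine's formula."""
    total_absorption = sum(area * alpha for area, alpha in surfaces)
    if total_absorption <= 0:
        raise ValueError("total absorption must be positive")
    return 0.161 * volume_m3 / total_absorption

# Same 10 x 8 x 4 m space with hard, reflective walls versus soft, absorbent ones.
cave_rt60 = sabine_rt60(10 * 8 * 4, box_surfaces(10, 8, 4, 0.03))
soft_rt60 = sabine_rt60(10 * 8 * 4, box_surfaces(10, 8, 4, 0.30))
```

The estimate is only a guide for setting a reverb's decay time, since the final value is usually tuned by ear for emotional effect.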

4. Looping and Continuity

Unlike a film with fixed start and end points, XR experiences may be open-ended. Music loops must therefore be carefully designed so that transitions are seamless. This prevents immersion from breaking and allows for continuity, even during extended user sessions.
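
One standard way to hide a loop seam is to crossfade the end of a clip into its beginning. The Python sketch below works on a plain list of samples and uses an equal-power (sine/cosine) fade; the function name and the choice of fade curve are assumptions for illustration, and a linear fade may suit highly correlated material better.

```python
import math

def make_seamless_loop(samples, fade_len):
    """Blend the last fade_len samples into the first fade_len samples.

    Returns a loop that is fade_len samples shorter than the input. The
    loop starts on the material that originally followed its last sample,
    so playback wraps around without a jump.
    """
    if not 0 < fade_len <= len(samples) // 2:
        raise ValueError("fade_len must be between 1 and half the clip length")
    body_len = len(samples) - fade_len
    loop = list(samples[:body_len])
    for i in range(fade_len):
        t = i / fade_len
        fade_in = math.sin(t * math.pi / 2)   # head of the clip rises
        fade_out = math.cos(t * math.pi / 2)  # tail of the clip falls
        loop[i] = samples[i] * fade_in + samples[body_len + i] * fade_out
    return loop

clip = [float(n) for n in range(10)]
looped = make_seamless_loop(clip, fade_len=2)
```

Real clips would use fades of tens of milliseconds or more, and musical loops are normally cut on bar boundaries so the rhythm also lines up.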

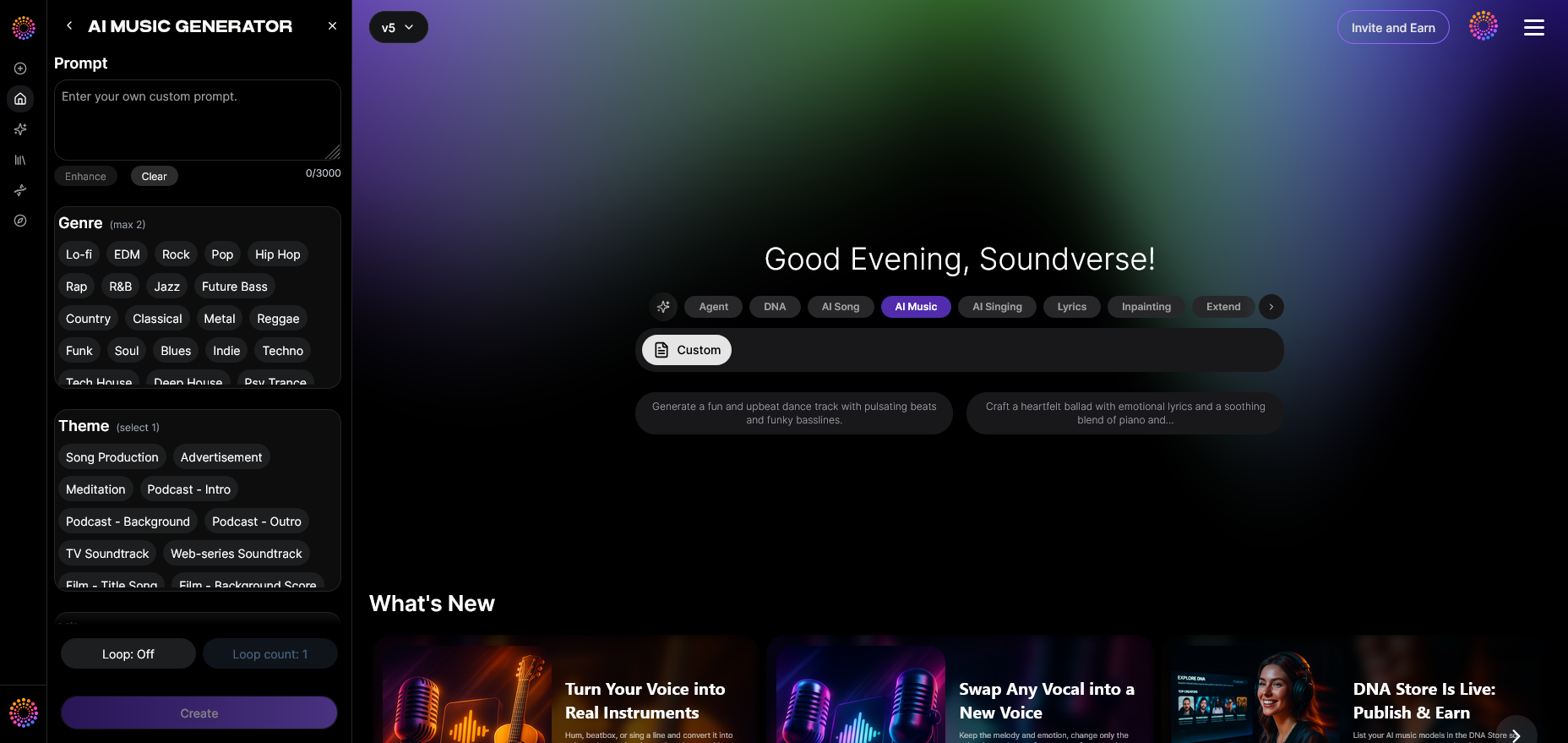

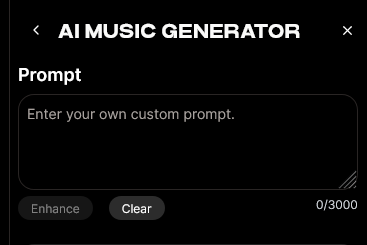

How to Make Music for Extended Reality with Soundverse AI Music Generator

Soundverse’s AI Music Generator is an innovative tool that helps producers and sound designers quickly create professional-grade instrumental soundscapes tailored for XR projects.

The AI Music Generator can produce fully arranged instrumental tracks from simple text prompts, focusing on mood, genre, and emotional tone. Each track is created without vocals, which makes it well suited to immersive or interactive environments. Its Loop Mode is designed for seamless repetition, which is critical for open-ended virtual experiences.

Key Capabilities

- Text-to-Music Generation: Describe your world, and Soundverse turns that text into a unique, copyright-safe soundtrack.

- Loop Mode: Generates music that can continuously play without audible seams.

- Genre & Mood Control: Choose from ambient, electronic, cinematic, or experimental tones depending on your environment.

- Model Options (V4 and V5): Different AI models provide varying sonic textures for more precise customization.

For XR creators, these capabilities make Soundverse a powerful partner, combining speed with creative depth. As outlined in “How AI-Generated Music Is Transforming the Music Industry,” automated tools have matured from basic loops to fully dynamic composition systems.

Step 1: Feature Overview

Access the AI Music Generator from the Soundverse main menu. This is your starting point for creating custom audio suited to any XR environment.

Step 2: Description Input

Enter a detailed text description that reflects your intended scene or atmosphere. Example prompts might include: “An ambient drone for a futuristic city” or “calming natural tones for an interactive forest in AR.”

Step 3: Style & Duration

Select your music style and desired track length. Both affect how the track will match the pacing of your XR experience. For looping background audio, choose shorter durations; for cinematic storytelling, opt for longer compositions.

Step 4: Generation

Click ‘Generate’ and allow the AI to process your description. Within moments, you’ll have a fully arranged instrumental track inspired by your text input. This workflow supports experimentation—try multiple versions using different moods or instruments.

For a deeper dive, watch our guide on how to make music and our Deep House tutorial on the Soundverse YouTube channel.

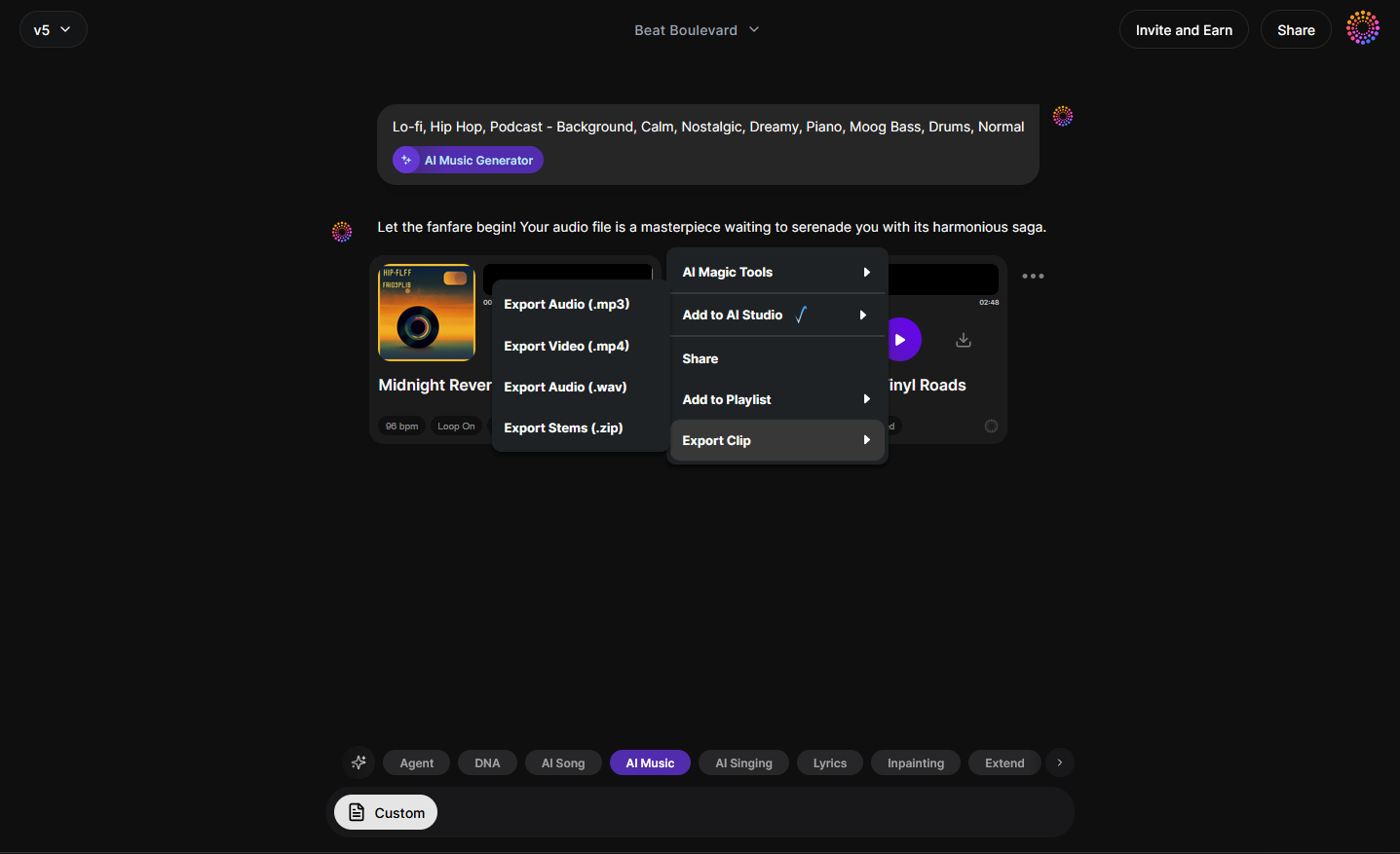

Step 5: Export Options

Download the music and integrate it directly into your XR project. Soundverse exports your track in high-quality audio formats suitable for spatialization in Unity, Unreal Engine, or WebXR platforms.

By following these steps, sound designers can streamline their production process and ensure consistency across extended experiences. If you’re already exploring genre creation, learn more from How to Create EDM with Soundverse AI or How to Create Jazz Music with Soundverse AI.

What Makes an Effective XR Soundtrack in 2026?

An effective XR soundtrack in 2026 prioritizes immersion, emotional clarity, and technical compatibility. With the rise of lightweight head-mounted displays and advanced spatial mixing engines, users expect cinematic-quality sound everywhere. As event technology trends evolve, immersive sound will define engagement in both digital and physical experiences.

Sound designers should focus on:

- Subtle Ambient Beds: Use low-frequency textures and slowly evolving harmonics to avoid distraction.

- Directional Anchors: Place soft melodies or rhythmic cues that orient the listener’s sense of space.

- Dynamic Systems: Layer your tracks to fade or change intensity based on user proximity.

- Adaptive Tempo: If the XR experience involves user-driven motion, design the tempo to adjust dynamically.
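
The proximity and tempo ideas in the list above can be sketched with two small mapping functions. The gain curve follows the common inverse-distance model (the same shape as the Web Audio API's "inverse" distance model); the tempo mapping, its BPM range, and the speed limit are hypothetical values chosen for illustration.

```python
def inverse_distance_gain(distance, ref_distance=1.0, rolloff=1.0):
    """Gain that stays at 1.0 inside ref_distance and falls off beyond it."""
    d = max(distance, ref_distance)
    return ref_distance / (ref_distance + rolloff * (d - ref_distance))

def adaptive_bpm(speed, min_bpm=70.0, max_bpm=120.0, max_speed=3.0):
    """Map user movement speed (metres per second) to a tempo, clamped."""
    t = min(max(speed / max_speed, 0.0), 1.0)
    return min_bpm + t * (max_bpm - min_bpm)

near_gain = inverse_distance_gain(2.0)  # source two metres away
walking_bpm = adaptive_bpm(1.5)         # user moving at a moderate pace
```

In a real project these values would usually be smoothed over time so that gain and tempo glide rather than jump from frame to frame.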

As highlighted in Music Industry Trends, adaptive audio is fast becoming the new standard, making these principles essential for all producers entering immersive sectors. For additional context, the XR Initiative Workshops explore how extended reality can be leveraged for social good and creative sound engagement.

Pro Tips for Mastering Spatial Sound Design in XR

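- Render in Ambisonic Format: Ensure compatibility with 360-degree playback systems by exporting your final stems in an ambisonic format. First-order AmbiX is broadly supported, while higher-order formats add spatial resolution where the target platform can play them.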

- Avoid Extreme Frequencies: High-pitched or overly low sounds can break immersion through distortion or discomfort.

- Leverage AI Tools for Iteration: Platforms like Soundverse’s AI Magic Tools allow faster prototyping without losing creative control.

- Collaborate with Developers: Audio integration in XR demands communication between sound designers and programmers for proper spatial mapping.

- Test in Device Context: Always test your soundtrack using actual XR gear to confirm that transitions, reverbs, and directional effects feel authentic.

Start Designing Immersive XR Soundscapes with Soundverse

Bring your extended reality projects to life with AI-generated, customizable sound design tailored for interactive and virtual worlds. Experience professional-quality audio creation in minutes—no studio required.


Related Articles

- The Role of AI Music in Film and Television: Explore how AI-generated scores are transforming storytelling across screens, from cinematic epics to streaming originals.

- How AI-Generated Music Is Transforming the Music Industry: Discover how artificial intelligence is redefining composition, collaboration, and the future of music production.

- Soundverse AI Revolutionizing Music Creation for New Age Content Creators: Uncover how Soundverse makes professional music creation accessible for creators in gaming, film, and virtual media.

- AI Music Generator and Human Composers: A Future Together: Learn how AI tools and human creativity are merging to shape the next era of composition and interactive sound design.


Soundverse is an AI assistant that lets content creators and music makers produce original content quickly using generative AI. With Soundverse Assistant and AI Magic Tools, users can create audio and music content quickly, easily, and cheaply. Soundverse Assistant is a conversational music companion: you speak to it to get tasks done, and the more you use it, the better it understands you and your goals. AI Magic Tools such as text to music, stem separation, and lyrics generation help turn creative ideas into finished music and audio. Soundverse is not just a digital audio workstation (DAW) competing with Ableton or Logic; it aims to build a new paradigm of easy, conversational content creation.
