Music as a Multi-Sensory Experience: 2026 Trends

Introduction

In 2026, music has moved from a purely auditory experience toward a multi-sensory one. As immersive music and experiential audio technologies advance, listeners no longer just hear sound: they feel it, see it, and even interact with it. This shift has been shaped by innovations in haptic technology, spatial sound design, extended reality (XR), and AI-driven composition tools like Soundverse DNA. As artists, producers, and technologists explore new frontiers of sensory music, the boundaries between art, science, and technology continue to blur.

In this article, we’ll explore the top multi-sensory music trends of 2026, how they’re reshaping the industry, and how Soundverse DNA empowers creators to design the next era of sensory soundscapes.

What Does 'Multi-Sensory Music' Mean in 2026?

"Multi-sensory music" refers to experiences that engage more than hearing. In 2026, audiences expect music that resonates across sight, touch, and even smell—thanks to connected environments and synchronized sensory devices. Venues and streaming platforms are integrating sensory cues to heighten emotional responses, while artists are experimenting with technologies that translate melodies into visual and tactile feedback.

This approach reflects an evolution from earlier immersive music trends of 2024 and 2025, when VR concerts and binaural sound were cutting-edge. Today, those features are standard. The next step is full sensory immersion—music you can feel in your skin and see in color.

What Are the Leading Multi-Sensory Music Trends in 2026?

1. Haptic and Vibration-Based Listening

Wearable technology has turned touch into a major player in experiential audio. Smart vests and wristbands now sync vibrations with bass frequencies, giving listeners physical sensations that match the rhythm. This adds a kinesthetic layer that converts passive listening into active participation. Projects like Music Not Impossible and oMoo’s haptic innovation exemplify this transformation, making sound something listeners can physically feel.
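As a rough illustration of how vibrations could be synced with bass frequencies, the sketch below low-pass filters an audio signal and turns the remaining low-frequency energy into one vibration intensity per frame. It is a minimal sketch under stated assumptions, not how any particular vest or wristband works: the function name `bass_envelope`, the 120 Hz cutoff, and the 512-sample frame size are all hypothetical choices.

```python
import math

def bass_envelope(samples, sample_rate, cutoff_hz=120.0, frame_size=512):
    """Return one vibration intensity (0.0 to 1.0) per full frame of audio.

    Assumes `samples` is a list of floats in the range -1.0 to 1.0.
    The cutoff and frame size are illustrative defaults, not device specs.
    """
    # Coefficient of a simple one-pole low-pass filter for the chosen cutoff.
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    state = 0.0
    intensities = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        energy = 0.0
        for x in samples[start:start + frame_size]:
            state += alpha * (x - state)  # keep mostly low-frequency content
            energy += state * state
        rms = math.sqrt(energy / frame_size)
        # Scale so a full-scale sine in the bass band lands near 1.0, then clamp.
        intensities.append(min(1.0, rms * math.sqrt(2.0)))
    return intensities
```

Each returned value could then drive a motor's strength for the duration of one frame. A real device would also need to account for latency between the audio and the actuator, which this sketch ignores.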

2. Spatial Audio and Adaptive Soundscapes

Spatial audio has evolved into adaptive soundscapes—sound environments that change in response to user movement or emotion. Using biometric feedback, systems adjust tempo, pitch, or even harmony to match the listener's physiological signals. CES 2026 showcased MIDI-based haptic gear that dynamically interprets gesture input, hinting at a responsive, real-time relationship between body and sound.
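To make the idea of biometric feedback adjusting tempo more concrete, here is a minimal sketch that nudges playback tempo toward a listener's heart rate. Everything in it is an assumption for illustration: the function names, the 60 to 140 BPM range, and the smoothing factor are hypothetical, and a real adaptive system would likely combine several signals.

```python
def target_tempo(heart_rate_bpm, min_tempo=60.0, max_tempo=140.0):
    """Clamp the heart rate into a musically usable tempo range (assumed bounds)."""
    return max(min_tempo, min(max_tempo, heart_rate_bpm))

def smooth_tempo(current_tempo, heart_rate_bpm, smoothing=0.1):
    """Move a fraction of the way toward the target on each update.

    Exponential smoothing avoids abrupt tempo jumps when the sensor
    reading is noisy. The 0.1 factor is an illustrative default.
    """
    target = target_tempo(heart_rate_bpm)
    return current_tempo + smoothing * (target - current_tempo)
```

Calling `smooth_tempo` once per sensor reading lets the tempo drift gradually toward the listener's state rather than snapping to it. The same pattern could be applied to pitch or filter settings.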

3. Visual Music Translation and Synesthetic Design

Visualizers have matured into synesthetic design systems that convert frequencies and tonalities into AI-driven visuals. Concerts and art installations use projection mapping to represent music as living, evolving forms. These systems have influenced game design, interactive museums, and mindfulness experiences.
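One simple way to picture converting frequencies into visuals is a direct pitch-to-color mapping. The sketch below places a frequency on a logarithmic scale and maps that position to a hue. It is only an illustrative assumption: the function name `frequency_to_rgb`, the 20 Hz to 20 kHz range, and the red-to-violet hue span are hypothetical, and the AI-driven systems described above would be far more elaborate.

```python
import colorsys
import math

def frequency_to_rgb(freq_hz, low_hz=20.0, high_hz=20000.0):
    """Map a frequency to an (R, G, B) tuple with components from 0 to 255.

    Low frequencies map to red and high frequencies to violet.
    The range and hue span are illustrative assumptions.
    """
    clamped = max(low_hz, min(high_hz, freq_hz))
    # Pitch perception is roughly logarithmic, so use a log scale.
    position = math.log(clamped / low_hz) / math.log(high_hz / low_hz)
    hue = position * 0.75  # 0.0 is red, 0.75 is violet on the HSV wheel
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))
```

A visualizer could call this for the dominant frequency of each audio frame and use loudness to control brightness, although that step is not shown here.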

4. Scent-Based Performances

It might sound futuristic, but 2026 has seen major festivals experiment with aroma-coded soundscapes. Tiny diffusers sync with the show’s emotional arc, releasing corresponding scents that enhance memory retention and emotional immersion.

5. Extended Reality (XR) Concerts

Virtual and augmented reality are now combined into XR environments that blend the physical and digital. Fans can attend concerts as avatars, feeling stage vibrations through motion chairs and interacting with performers’ AI personas. These hybrid events bridge live venues and virtual worlds.

6. AI-Generated Immersive Composition

AI is now integral to creating sensory music. Beyond composition, AI tools are used to craft entire experiences—composing for spatial and physical dimensions simultaneously. Platforms like Soundverse DNA allow creators to generate music that retains an artist’s authentic sonic identity while scaling it into multi-sensory environments. For a deeper dive, watch our Soundverse Tutorial Series on making Deep House music or How to Make Music for hands-on insights.

7. Neural Audio Interfaces

Brain-computer interface innovations have entered the creative process. Artists and producers experiment with EEG-based tools that map brainwaves into real-time atmospheric musical adjustments. This technology personalizes listening to an unparalleled degree, transforming music into bioreactive art.

8. Immersive Retail Sound Branding

Retail and hospitality brands use multi-sensory soundscapes for immersive brand storytelling. With advancements in experiential audio, stores now match their visual identity with ambient sound, scent, and even micro-haptic floor vibrations. This approach creates stronger emotional associations and memory recall.

9. Collaborative Sensory Experiences

Cloud-based creation spaces allow musicians, developers, and experiential designers to co-create multi-sensory content remotely. These collaborations merge expertise from sound engineering, visual design, and olfactory science into one cohesive art form.

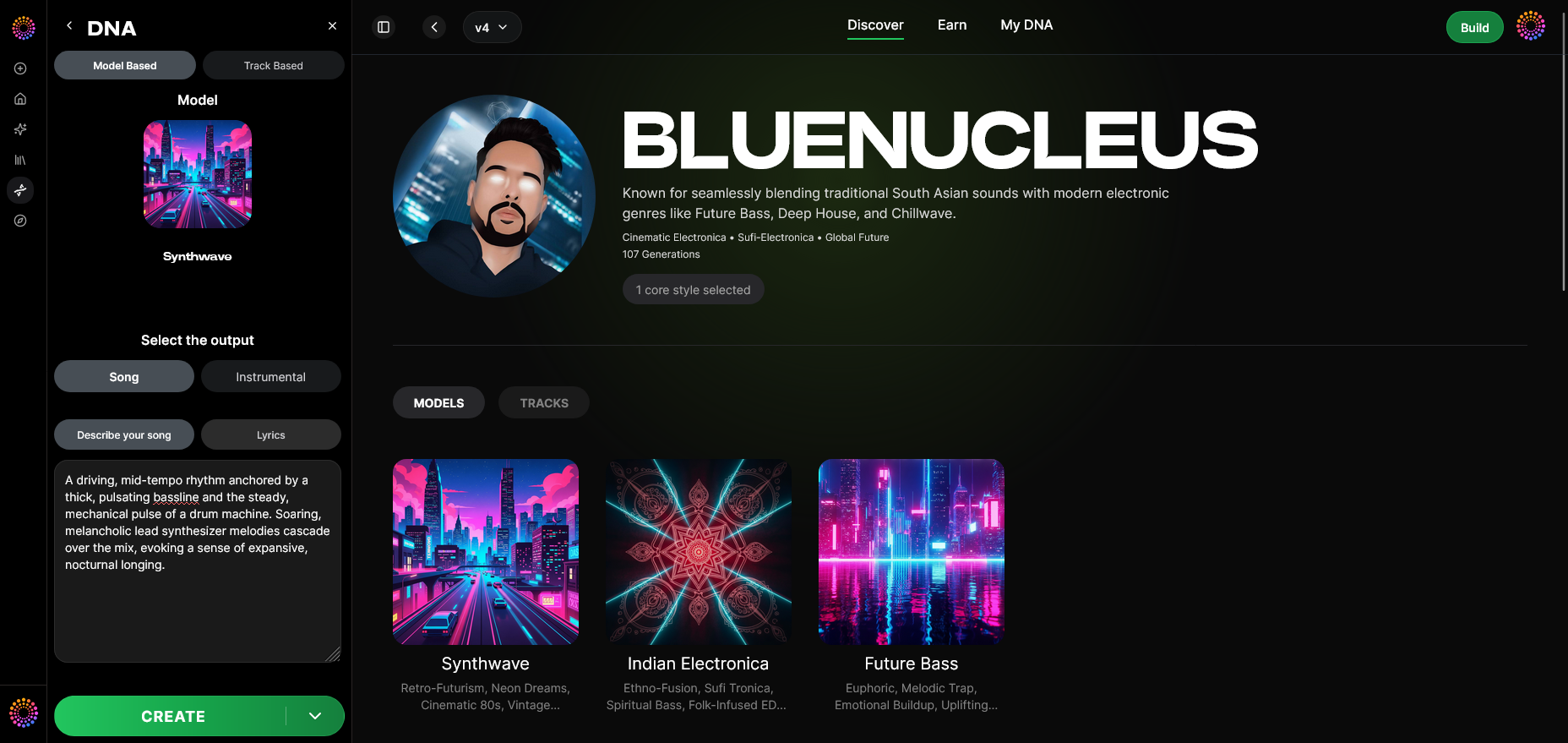

10. Sonic Identity and DNA Modeling

Sound DNA is the concept of defining an artist’s identity as data. Innovative solutions analyze the core traits of an artist’s sound (rhythm patterns, timbral qualities, and tonal biases), enabling AI systems to replicate or extend that style ethically. Soundverse DNA leads this space by providing a licensed ecosystem for creative expansion.
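To illustrate what treating an artist's identity as data could look like, the sketch below represents a sound as a short list of numeric traits and compares two such lists with cosine similarity. This is a generic technique chosen for illustration, not a description of how Soundverse DNA works. The trait order and all the numbers are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # an all-zero profile has no direction to compare
    return dot / (norm_a * norm_b)

# Hypothetical trait order: [tempo, syncopation, brightness, minor-key share],
# each already scaled to the range 0.0 to 1.0. All values are made up.
artist_profile = [0.60, 0.80, 0.30, 0.70]
candidate_a = [0.62, 0.75, 0.35, 0.70]
candidate_b = [0.90, 0.10, 0.90, 0.10]

closest = max(
    [("candidate_a", candidate_a), ("candidate_b", candidate_b)],
    key=lambda item: cosine_similarity(artist_profile, item[1]),
)[0]
```

Here `closest` names whichever candidate track sits nearer to the artist's profile. A production system would rely on learned audio features rather than a handful of hand-picked traits.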

Why Are Multi-Sensory Music Experiences in Demand?

Three converging factors have led to the demand for sensory music in 2026:

- Post-Digital Fatigue: Audiences want more meaningful, embodied experiences beyond screens.

- Technological Ecosystem Maturity: Wearables, spatial computing devices, and wireless haptics are now affordable and interoperable.

- Cultural Shift Toward Interactivity: Consumers expect participation, not just observation—especially in entertainment.

For professionals in audio technology, sound design, and experiential marketing, these trends highlight massive new revenue channels—from haptic album releases to personalized bioreactive playlists.

How Does Experiential Audio Impact Creative Expression?

The creative possibilities opened by immersive music extend to composition, storytelling, and emotional connection. Musicians now treat every note as a multisensory signal. Producers consider how lighting, environment, and even scent align with sonic narratives. Game composers design cues that trigger multidimensional sensations, while film composers use psychoacoustic mapping for sensory synchronization.

For example, as explained in Soundverse’s article on AI music in the USA, composers are increasingly integrating AI tools that analyze emotional intent, allowing synthesis across sound and sensation. Multi-sensory creation is becoming an expected skill—not an experiment.

How Soundverse DNA Powers Multi-Sensory Music in 2026

Soundverse DNA represents a milestone in the ethical and creative application of AI within today's music technology trends. As an artist-trained AI system, it learns from licensed catalogs to model the distinct sonic identity—called “DNA”—of each artist. Unlike generic music generators, it allows artists to monetize their sound style while giving users legal access to create within that sonic spectrum.

Key Features and Capabilities

- Full DNA & Voice DNA: Capture an artist’s full production essence or just their vocal timbre and phrasing style. Perfect for multi-sensory compositions requiring signature sound continuity.

- DNA Marketplace: Enables creators to license their sonic styles to others, fostering a shared ecosystem of sound evolution.

- Sensitivity Selector: Allows clustering of music by era or vibe—ideal for sensory designers curating layered experiences.

- Private Mode: Ensures secure co-creation, allowing brands or artists to work confidentially on new projects.

Primary Use Cases

- Artist Monetization: Passive income through style licensing.

- Sonic Branding: Consistent sound identity across installations, campaigns, or XR experiences.

- Film and Game Scoring: Generate music that matches pre-existing sensory themes.

- Personal Workflow Acceleration: Rapid prototyping for producers working on immersive soundscapes.

Soundverse DNA aligns with the rising experiential audio expectations discussed in AI music trends and complements other Soundverse tools such as the AI Singing Generator, enabling integration across disciplines.

Related Tools Driving Sensory Innovation

While Soundverse DNA leads in ethically trained sound modeling, other Soundverse tools enhance multi-sensory music creation:

- Voice to Instrument: Translates vocal expressions into instrument sounds—ideal for performers building personalized sonic textures.

- AI Music Generator: Produces high-quality instrumental layers that can form the foundation for immersive installations.

- AI Singing Generator: Creates experimental vocal timbres, from whispering to animalistic tones, expanding multisensory storytelling.

When combined, these tools support audio innovation across installations, virtual performances, and interactive art.

For further inspiration, see related guides like How to create AI-generated music and Generate music with vibe filters that demonstrate how creatives blend sensory layers today.

Start Creating Multi-Sensory Music with AI Today!

Unlock the power of Soundverse to craft immersive compositions that blend sound, emotion, and technology. Experience next-generation tools that let you shape music as a complete sensory journey.

Related Articles

- How AI-Generated Music Is Transforming the Music Industry — Discover how artificial intelligence is redefining creativity and production across modern music ecosystems.

- Soundverse AI Revolutionizing Music Creation for New-Age Content Creators — Explore how Soundverse empowers creators to produce professional-grade music instantly using AI-assisted innovation.

- The Role of AI Music in Film and Television — Learn how AI-generated soundtracks are enhancing emotional depth and storytelling in visual media.

- AI Music Generator and Human Composers: A Future Together — See how collaboration between human artistry and AI technology is shaping tomorrow’s musical landscape.

Here's how to make AI Music with Soundverse

Text Guide

- To know more about AI Magic Tools, check here.

- To know more about Soundverse Assistant, check here.

- To know more about Arrangement Studio, check here.

Soundverse is an AI Assistant that allows content creators and music makers to create original content in a flash using Generative AI. With the help of Soundverse Assistant and AI Magic Tools, our users get an unfair advantage over other creators to create audio and music content quickly, easily, and cheaply.

Soundverse Assistant is your ultimate music companion. You simply speak to the assistant to get your stuff done. The more you speak to it, the better it understands you and your goals.

AI Magic Tools help convert your creative dreams into tangible music and audio. Use AI Magic Tools such as text to music, stem separation, or lyrics generation to realise your content dreams faster.

Soundverse is here to take music production to the next level. We're not just a digital audio workstation (DAW) competing with Ableton or Logic; we're building a completely new paradigm of easy and conversational content creation.

TikTok: https://www.tiktok.com/@soundverse.ai

Twitter: https://twitter.com/soundverse_ai

Instagram: https://www.instagram.com/soundverse.ai

LinkedIn: https://www.linkedin.com/company/soundverseai

YouTube: https://www.youtube.com/@SoundverseAI

Facebook: https://www.facebook.com/profile.php?id=100095674445607

Join Soundverse for Free and make Viral AI Music

We are constantly building more product experiences. Keep checking our Blog to stay updated about them!