How to Make Music That Adapts to Biometric Data

In 2026, adaptive music technology sits at the intersection of health, creativity, and artificial intelligence. Music that syncs with your heart rate, tracks that respond to emotional states, and generative soundscapes driven by body temperature are changing how we experience music. For producers, sound designers, and developers alike, building music that responds to heart rate and other health data opens up new creative and commercial opportunities.

What is adaptive music technology?

Adaptive music technology refers to systems that modify musical elements—like tempo, key, intensity, or instrumentation—in response to dynamic input. These inputs can come from a listener’s physical or psychological state, often measured through biometric sensors such as heart rate monitors, skin conductance sensors, or even EEG headbands.

In 2026, adaptive soundtrack systems are increasingly used in wellness apps, gaming environments, and immersive experiences. From meditation platforms that respond to breathing patterns to video games that shift music intensity with player stress levels, this approach enhances immersion and emotional engagement.

Why is biometric-adaptive music gaining popularity in 2026?

Tighter integration between wearables, smart devices, and software ecosystems has made adaptive music more accessible this year. Smartwatches, fitness trackers, and even AR/VR headsets now provide continuous health data streams that can drive music in sync with the wearer’s physiology. For instance, companies developing wellness experiences use audio driven by health data to help users regulate stress or improve focus, adjusting sound patterns in response to heart rate variability.

Moreover, music producers in 2026 have greater access to AI-powered composition tools that allow them to map biometric data to musical structures. The result is not static audio but dynamically responsive compositions that evolve in real time as the listener’s body state changes.

What are the core elements of creating biometric-based adaptive music?

- Biometric Input Source – This includes devices that generate physiological data such as heart rate, breathing rate, skin temperature, or EEG patterns.

- Data Mapping Architecture – Translating biometric values into musical parameters. For example, an increase in heart rate could correspond to a rise in tempo or intensity.

- Music Generation Engine – The software that produces and manipulates audio elements to reflect physiological shifts.

- Feedback System – Ensures that the music’s response loop maintains coherence, avoiding abrupt changes that disrupt the user experience.

The goal is to make music that reflects the listener’s state while still sounding intentional and artistically expressive. It’s both a technological and creative challenge.
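The data mapping and feedback elements listed above can be illustrated with a minimal Python sketch. The heart rate range (50 to 150 bpm), the tempo range (60 to 140 BPM), and the smoothing factor are assumptions chosen for illustration; they do not come from any particular sensor or from Soundverse.

```python
from dataclasses import dataclass
from typing import Optional


def map_range(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping at both ends."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    t = (value - in_lo) / (in_hi - in_lo)
    t = max(0.0, min(1.0, t))  # clamp so outlier readings cannot push tempo out of range
    return out_lo + t * (out_hi - out_lo)


@dataclass
class SmoothedTempo:
    """Feedback stage: exponential smoothing so the tempo never jumps abruptly."""
    alpha: float = 0.2              # assumed smoothing factor, 0 < alpha <= 1
    tempo: Optional[float] = None   # current smoothed tempo in BPM

    def update(self, heart_rate_bpm: float) -> float:
        # Assumed mapping: 50-150 bpm heart rate -> 60-140 BPM musical tempo.
        target = map_range(heart_rate_bpm, 50, 150, 60, 140)
        if self.tempo is None:
            self.tempo = target  # first reading: start at the target
        else:
            self.tempo += self.alpha * (target - self.tempo)  # move part of the way
        return self.tempo
```

A lower `alpha` makes the music respond more slowly but more smoothly; a higher value tracks the body more closely at the cost of audible jumps.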

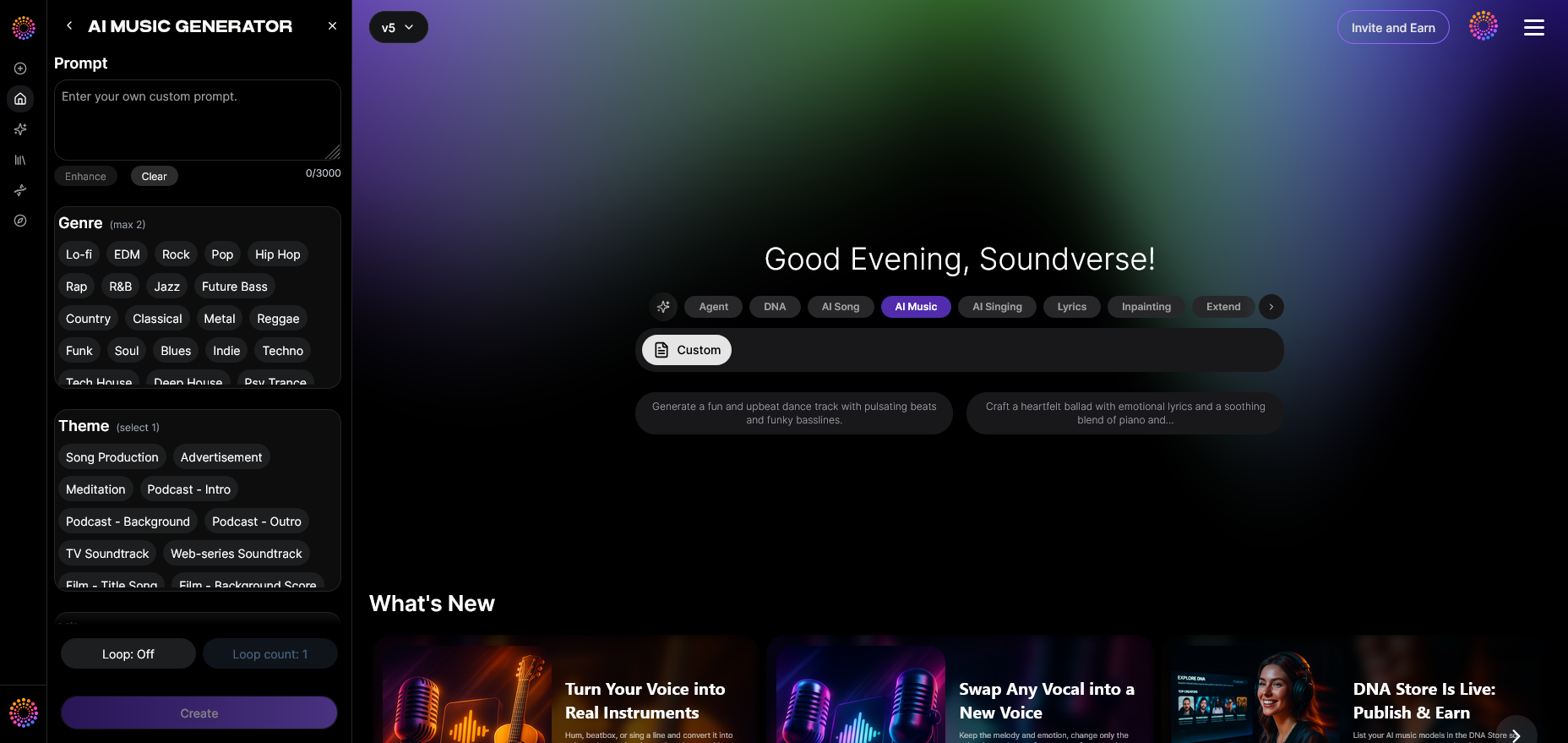

How to make adaptive biometric music with Soundverse AI Music Generator

Soundverse’s AI Music Generator provides a robust foundation for crafting adaptive music using biometric inputs. Designed to create fully produced instrumental compositions from text descriptions, it excels at generating high-quality, loopable tracks suitable for games, meditation, and wellness apps.

Core features:

- Text-to-music generation for creating instrumental music without vocals.

- Loop Mode for seamless, continuous playback—perfect for adaptive experiences needing uninterrupted sound flow.

- Fine-tuned control over genre, mood, and instruments, letting you tailor compositions for calm, energetic, or meditative states.

- Selectable V4 and V5 model options for nuanced, stylistically diverse results.

For a deeper dive into Soundverse’s functionality, watch our guide on how to make music, or see the “Explore” tab tutorial in the Soundverse Tutorial Series.

Typical use cases:

- Wellness platforms: Generate tracks that adapt to heart rate and breathing data for meditation or relaxation apps.

- Games: Empower survival or adventure titles to adjust sound environments based on player tension and biometric feedback.

- Background scoring: Produce adaptive soundbeds that shift mood automatically, enhancing immersive storytelling.

When integrated with biometric data streams through the Soundverse API, developers can programmatically trigger new track generations or modifications whenever physiological metrics change. This workflow makes it possible to build music experiences driven by heart rate and other health data that respond as the listener’s state changes.
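One way to decide when a change in physiological metrics should trigger a new generation is sketched below in Python. The zone thresholds, the 30-second minimum interval, and the `on_change` callback are all hypothetical; the callback stands in for whatever request your integration sends, and no actual Soundverse API call is shown here.

```python
import time
from typing import Callable, Optional


class ZoneTrigger:
    """Call `on_change` only when the heart rate moves into a different zone."""

    def __init__(self, on_change: Callable[[str], None],
                 min_interval_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.on_change = on_change            # hypothetical hook, e.g. request a new track
        self.min_interval_s = min_interval_s  # assumed rate limit between triggers
        self.clock = clock
        self.current_zone: Optional[str] = None
        self.last_fired: Optional[float] = None

    @staticmethod
    def zone_for(heart_rate_bpm: float) -> str:
        # Assumed thresholds, for illustration only.
        if heart_rate_bpm < 70:
            return "calm"
        if heart_rate_bpm < 110:
            return "active"
        return "intense"

    def feed(self, heart_rate_bpm: float) -> bool:
        """Process one reading; return True if the callback fired."""
        zone = self.zone_for(heart_rate_bpm)
        if zone == self.current_zone:
            return False  # nothing changed
        now = self.clock()
        if self.last_fired is not None and now - self.last_fired < self.min_interval_s:
            return False  # changed, but too soon after the last trigger
        self.current_zone = zone
        self.last_fired = now
        self.on_change(zone)
        return True
```

Rate limiting matters because raw sensor readings fluctuate; without it, a heart rate hovering near a threshold would request new music on almost every sample.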

Step-by-Step Guide to Making Adaptive Biometric Music

Step 1: Feature Overview

Access the AI Music Generator from the main menu within your Soundverse workspace. This is the control center where you will configure your adaptive music concept before linking it to biometric data.

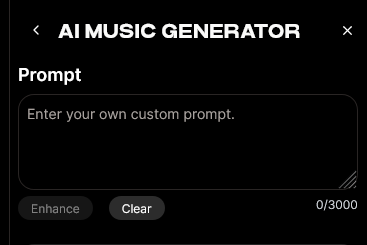

Step 2: Description Input

Enter a descriptive text prompt that defines your intended sound. For example, you might type: “Create a calm ambient soundscape with soft pads and slow rhythm suitable for meditation.” This description will serve as the musical foundation.

Step 3: Style & Duration

Select the desired style and duration for your music. Choose genres such as ambient, chill, or cinematic depending on your project’s context. Duration can align with physiological feedback cycles, such as one-minute segments that regenerate with new biometric data.
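As a rough illustration of one-minute segments that regenerate with new biometric data, the Python sketch below averages heart rate samples per segment and picks a text prompt for each. The thresholds and the two non-meditation prompts are assumptions made for this example; only the calm prompt comes from Step 2.

```python
from statistics import mean


def segment_averages(samples, segment_s=60):
    """Group (timestamp_s, heart_rate_bpm) samples into fixed-length segments.

    Returns a list of (segment_start_s, average_bpm), ordered by time.
    """
    buckets = {}
    for timestamp_s, bpm in samples:
        buckets.setdefault(int(timestamp_s // segment_s), []).append(bpm)
    return [(index * segment_s, mean(values)) for index, values in sorted(buckets.items())]


def prompt_for(avg_bpm):
    """Pick a text prompt for the next segment (assumed thresholds and wording)."""
    if avg_bpm < 70:
        return ("Create a calm ambient soundscape with soft pads and slow rhythm "
                "suitable for meditation.")
    if avg_bpm < 110:
        return "Create a chill instrumental track with a steady mid-tempo groove."
    return "Create an energetic cinematic track with driving percussion."


if __name__ == "__main__":
    readings = [(0, 60), (30, 70), (60, 100), (90, 110)]
    for start_s, avg in segment_averages(readings):
        print(start_s, avg, prompt_for(avg))
```

Averaging over the whole segment, rather than using the latest sample, keeps a single noisy reading from changing the style of the next segment.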

Step 4: Generation

Click Generate to produce your instrumental track. The Soundverse AI analyzes your prompt and outputs a fully mixed audio file consistent with the described mood and style.

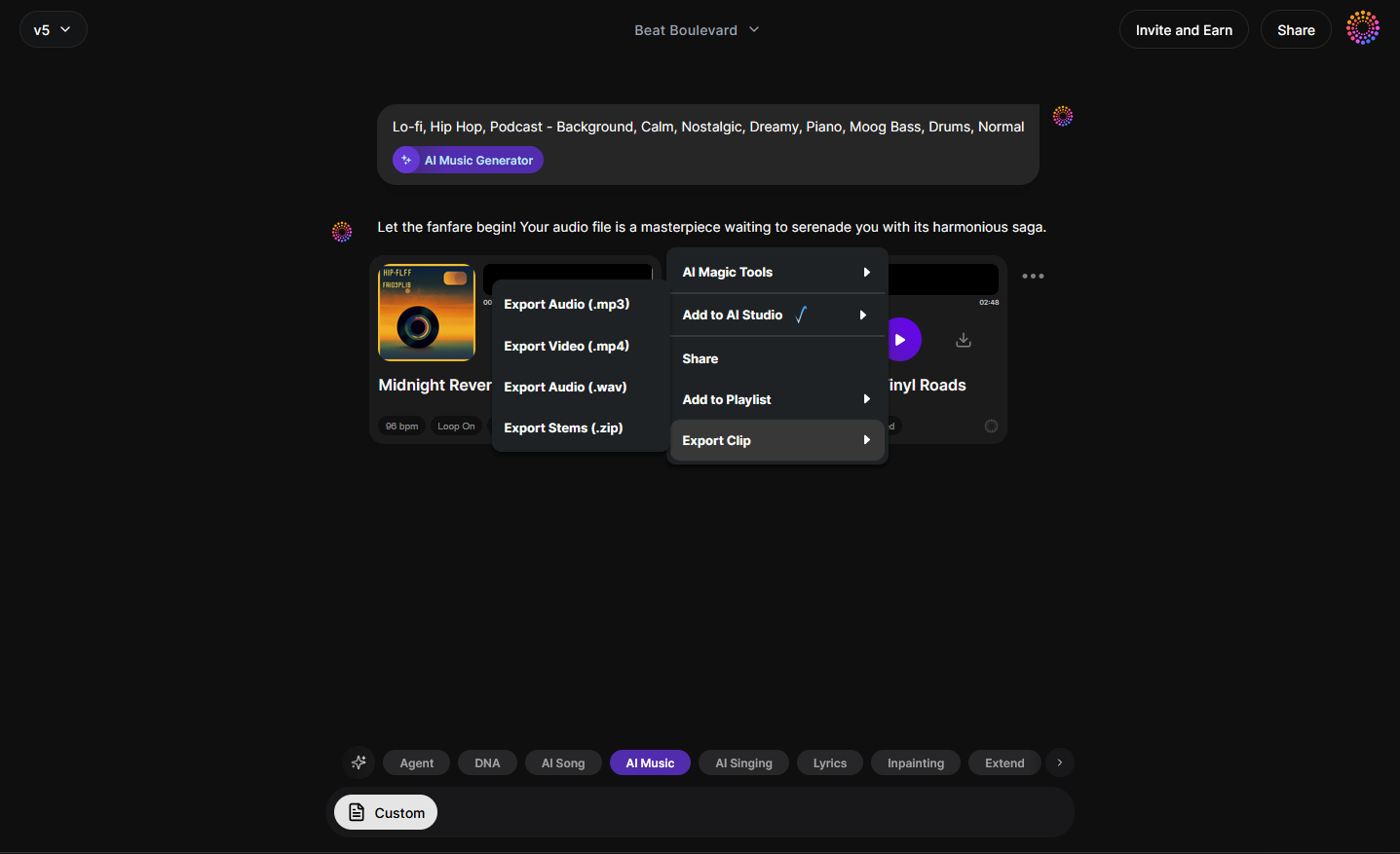

Step 5: Export Options

After generation, export your music in your preferred format. If you’re creating an adaptive soundtrack, you can generate several loops, each corresponding to a specific heart rate zone or emotional intensity, and switch between them as the listener’s state changes.
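A sketch of selecting an exported loop by heart rate zone might look like the following Python. It assumes the common five-zone model based on fractions of maximum heart rate and the rough `220 - age` estimate of maximum heart rate; the file names are hypothetical placeholders for loops you have exported yourself.

```python
import bisect

# Upper bounds of zones 1-4 as a fraction of maximum heart rate (common five-zone model).
ZONE_UPPER_BOUNDS = [0.6, 0.7, 0.8, 0.9]

# Hypothetical file names, one exported loop per zone.
LOOPS = [
    "zone1_ambient.wav",
    "zone2_chill.wav",
    "zone3_groove.wav",
    "zone4_drive.wav",
    "zone5_peak.wav",
]


def estimated_max_hr(age):
    """Rough population estimate of maximum heart rate; individuals vary widely."""
    return 220 - age


def loop_for(heart_rate_bpm, age):
    """Return the loop file for the zone that contains this heart rate."""
    fraction = heart_rate_bpm / estimated_max_hr(age)
    zone_index = bisect.bisect_right(ZONE_UPPER_BOUNDS, fraction)  # 0..4
    return LOOPS[zone_index]
```

For a 30-year-old (estimated maximum 190 bpm), a reading of 140 bpm is about 74% of maximum, which falls in zone 3.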

Pro Tips for Building Adaptive Sound Systems

- Define dynamic mapping rules early – Before composing, decide how different biometric signals will influence musical parameters like tempo or key.

- Use loop-based composition – Loopable tracks enable seamless adaptation without abrupt transitions.

- Test across multiple biometric ranges – Ensure the adaptive system produces emotionally coherent results even when heart rate or stress levels fluctuate sharply.

- Combine data streams – Use multiple biometric inputs, such as respiration and heart rate, for richer and more organic variation.

- Leverage the Soundverse API – This interface lets developers trigger modifications programmatically when new sensor readings arrive.
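The tip about combining data streams can be sketched as a weighted blend of normalized signals. In this Python example the input ranges (50 to 150 bpm for heart rate, 6 to 30 breaths per minute) and the 0.6 weight are assumptions, and `arousal` is simply an illustrative name for the resulting 0-to-1 intensity score.

```python
def normalize(value, lo, hi):
    """Scale value into 0..1 relative to [lo, hi], clamping readings outside the range."""
    return max(0.0, min(1.0, (value - lo) / (hi - lo)))


def arousal(heart_rate_bpm, breaths_per_min, hr_weight=0.6):
    """Blend heart rate and respiration into one 0..1 intensity score.

    hr_weight is the share given to heart rate; respiration gets the remainder.
    """
    hr = normalize(heart_rate_bpm, 50, 150)   # assumed heart rate range
    br = normalize(breaths_per_min, 6, 30)    # assumed respiration range
    return hr_weight * hr + (1.0 - hr_weight) * br
```

Sweeping both inputs across their full ranges and checking that the score rises steadily is a simple way to apply the testing tip before any audio is involved.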

How does adaptive music impact user experiences?

Adaptive soundtrack design deepens emotional engagement. When a fitness app raises its music’s tempo as your heart rate climbs, or when meditation music gradually fades into softer tonalities as your breath slows, a feedback loop enhances focus and well-being. This is why adaptive music technology has become central in mental health and fitness domains in 2026.

Interactive installations, museums, and therapeutic clinics also use these systems to increase immersion and personalization. A hospital relaxation room might, for example, deploy adaptive ambient music tailored to patients’ real-time pulse data, providing comfort without requiring manual control.

Related Learning and Resources

If you’re exploring the creative potential of AI-driven composition, check out additional guides on Soundverse’s blog, including How to Create Healing Meditation Music with Soundverse AI, Generate AI Music with Soundverse Text-to-Music, and How AI-Generated Music is Transforming the Music Industry. You can also explore platform comparisons such as Soundraw Alternative and Mubert Alternatives Soundverse for context.

Start Creating Music That Adapts to Every Beat of You

Experience the future of sound with Soundverse’s adaptive music technology. Whether driven by emotion or biometric feedback, create songs that evolve in real time to your energy and mood.

Related Articles

- How AI-Generated Music Is Transforming the Music Industry: Discover how AI tools are changing the way music is produced, composed, and experienced worldwide.

- Soundverse AI Revolutionizing Music Creation for New Age Content Creators: Learn how Soundverse empowers modern creators to generate innovative, customizable tracks effortlessly.

- The Role of AI Music in Film and Television: Explore how AI-generated music enhances storytelling and adapts dynamically to visual cues.

- How to Make AI-Generated Music: A step-by-step guide to creating your first track using AI-powered music generation tools.

Soundverse is an AI Assistant that allows content creators and music makers to create original content in a flash using Generative AI.

With the help of Soundverse Assistant and AI Magic Tools, our users gain an edge over other creators by producing audio and music content quickly, easily, and cheaply.

Soundverse Assistant is your ultimate music companion. You simply speak to the assistant to get your stuff done. The more you speak to it, the more it starts understanding you and your goals.

AI Magic Tools help convert your creative dreams into tangible music and audio. Use AI Magic Tools such as text to music, stem separation, or lyrics generation to realise your content dreams faster.

Soundverse is here to take music production to the next level. We're not just a digital audio workstation (DAW) competing with Ableton or Logic; we're building a completely new paradigm of easy, conversational content creation.
